Terminator: Learning Optimal Exit Points for Early Stopping in Chain-of-Thought Reasoning

- We introduce hindsight-optimal reasoning length, a novel framework for determining where an LRM should stop thinking.

- Terminator is a lightweight single-layer probe that sits on top of any LRM. No fine-tuning of the base model is required.

- Best or second-best on 28 of 32 metrics across four models (Qwen3-8B/14B, Ministral-8B/14B) and four benchmarks (MATH-500, AIME 2025, HumanEval, GPQA).

- 2× faster wall-clock inference on average with only ~10% throughput overhead.

Abstract

Large Reasoning Models (LRMs) achieve impressive performance on complex reasoning tasks via Chain-of-Thought (CoT) reasoning, which enables them to generate intermediate thinking tokens before arriving at the final answer. However, LRMs often suffer from significant overthinking, spending excessive compute time even after the answer is generated early on. Prior work has identified the existence of an optimal reasoning length such that truncating reasoning at this point significantly shortens CoT outputs with virtually no change in performance. However, determining optimal CoT lengths for practical datasets is highly non-trivial as they are fully task and model-dependent. We precisely address this and design Terminator, an early-exit strategy for LRMs at inference to mitigate overthinking. The central idea underpinning Terminator is that the first arrival of an LRM's final answer is often predictable, and we leverage these first answer positions to create a novel dataset of optimal reasoning lengths to train Terminator. Powered by this approach, Terminator achieves significant reductions in CoT lengths of 14% – 55% on average across four challenging practical datasets: MATH-500, AIME 2025, HumanEval, and GPQA, whilst outperforming current state-of-the-art methods.

Motivation

Large Reasoning Models (LRMs) frequently overthink: even after generating the correct final answer early in their Chain-of-Thought, they continue to reason for hundreds or thousands of additional tokens, double-checking, exploring alternatives, and sometimes even changing to a wrong answer.

A natural question follows: can we detect when the LRM has already generated its final answer? We find that the answer is yes. By aligning many CoTs to the position where the final answer first appears and averaging, a clear confidence spike emerges at exactly that position. Individual CoT signals are noisy (Figure 2, left), but event-locked averaging across 3,200 CoTs from math, science, and coding problems reveals a consistent and sharp signal (Figure 2, right).

While this averaged signal clearly marks the answer position, using it directly during online inference is not straightforward: it requires multiple CoTs and knowledge of the answer position, which is the very thing we are trying to predict. This motivates a learned approach: training a probe on the LRM's hidden states to predict, token by token, whether the final answer has already been generated.

↑ Back to sectionsKey Results

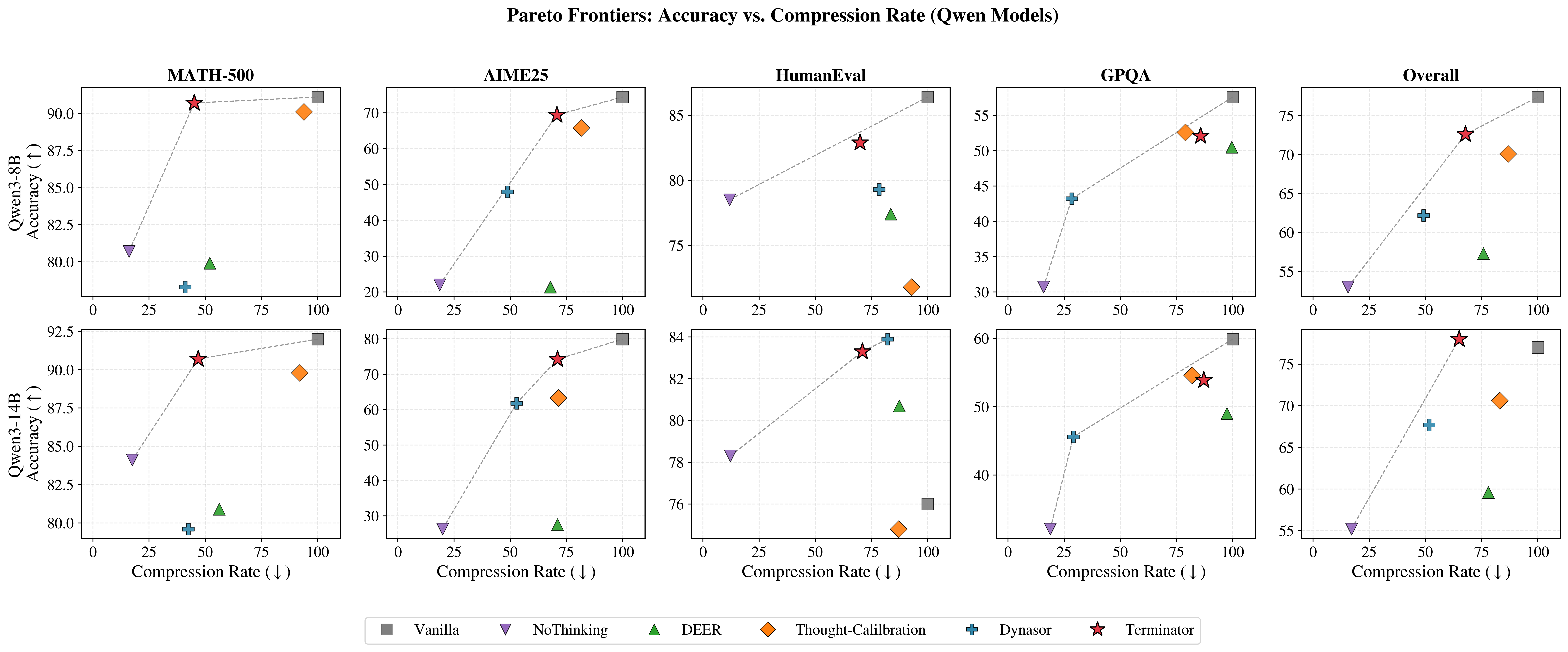

Table 1 shows that Terminator achieves competitive performance compared to existing methods. We provide results for two additional models, Ministral-3-8B-Reasoning-2512 and Ministral-3-14B-Reasoning-2512, in the table in our paper. Figure 3 shows the Pareto frontier on each dataset using the same data provided in Table 1. Notably, Terminator lies on or near the Pareto frontier for all datasets and all models.

| Method | Math | Coding | Science | Overall | ||||||||||

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

| MATH-500 | AIME 2025 | HumanEval | GPQA | |||||||||||

| Acc↑ | Tok↓ | CR↓ | Acc↑ | Tok↓ | CR↓ | Acc↑ | Tok↓ | CR↓ | Acc↑ | Tok↓ | CR↓ | Acc↑ | CR↓ | |

| Qwen3-8B | ||||||||||||||

| Vanilla | 91.1% | 5,037 | 100% | 74.4% | 14,499 | 100% | 86.4% | 3,792 | 100% | 57.6% | 8,594 | 100% | 77.4% | 100% |

| NoThinking | 80.7% | 809 | 16.1% | 22.0% | 2,355 | 18.6% | 78.5% | 353 | 11.8% | 30.7% | 1,204 | 15.8% | 53.0% | 15.6% |

| DEER | 79.9% | 2,602 | 52.0% | 21.4% | 10,349 | 67.8% | 77.4% | 3,275 | 83.6% | 50.5% | 8,553 | 99.6% | 57.3% | 75.8% |

| Thought-Calib | 90.1% | 4,372 | 93.9% | 65.8% | 11,014 | 81.5% | 71.8% | 3,267 | 92.9% | 52.6% | 6,240 | 78.9% | 70.1% | 86.8% |

| Dynasor | 78.3% | 1,850 | 41.0% | 48.0% | 7,479 | 48.8% | 79.3% | 2,883 | 78.4% | 43.2% | 2,455 | 28.4% | 62.2% | 49.2% |

| Terminator | 90.7% | 2,425 | 45.1% | 69.4% | 10,970 | 70.7% | 82.9% | 2,716 | 69.9% | 52.1% | 7,543 | 85.7% | 72.6% | 67.8% |

| Qwen3-14B | ||||||||||||||

| Vanilla | 92.0% | 4,598 | 100% | 79.9% | 14,255 | 100% | 76.0% | 3,296 | 100% | 59.9% | 7,628 | 100% | 77.0% | 100% |

| NoThinking | 84.1% | 786 | 17.5% | 26.3% | 2,472 | 19.9% | 78.3% | 317 | 12.2% | 32.1% | 1,265 | 18.8% | 55.2% | 17.1% |

| DEER | 80.9% | 2,501 | 56.2% | 27.6% | 10,497 | 71.0% | 80.7% | 2,961 | 87.3% | 49.0% | 7,451 | 97.4% | 59.6% | 78.0% |

| Thought-Calib | 89.8% | 3,778 | 92.0% | 63.3% | 9,429 | 71.3% | 74.8% | 2,582 | 87.1% | 54.6% | 5,757 | 81.9% | 70.6% | 83.1% |

| Dynasor | 79.6% | 1,702 | 42.4% | 61.8% | 7,937 | 52.8% | 83.9% | 2,611 | 82.2% | 45.6% | 2,101 | 29.1% | 67.7% | 51.6% |

| Terminator | 90.7% | 2,261 | 46.8% | 74.2% | 10,787 | 71.0% | 83.3% | 2,358 | 70.9% | 53.9% | 6,798 | 87.1% | 78.0% | 65.0% |

Latency Analysis

We benchmark Terminator's latency and throughput using a vLLM-compatible implementation on MATH-500 (batch size 1, single GH200). Terminator more than halves the average latency with only a small throughput overhead of 10.8% for Qwen3-8B and 7.5% for Qwen3-14B. Because Terminator's architecture (a single transformer layer and an FFN) remains fixed, the overhead shrinks proportionally as the base LRM grows.

| Method | Latency (s) | Throughput (tok/s) |

|---|---|---|

| Qwen3-8B | ||

| Vanilla | 32.68 ± 9.59 | 151.5 ± 4.4 |

| Terminator | 14.10 ± 6.27 | 135.2 ± 2.0 |

| Qwen3-14B | ||

| Vanilla | 43.38 ± 13.98 | 98.0 ± 2.0 |

| Terminator | 18.76 ± 6.52 | 90.6 ± 0.8 |

Method

Terminator is a single-layer transformer that sits on top of a base LRM and injects the end-of-thought token when the LRM has generated its final answer. Terminator accepts the final hidden-states of the LRM as input, and predicts a 0 if the answer has not been generated yet, or 1 if it has.

Training Details

Terminator is trained to predict the exact position of the first occurrence of the final answer of an LRM's CoT during online inference. An important challenge we overcome is to identify the exact position of the earliest final answer occurrence for a given CoT. Our training dataset is curated using the following pipeline for robust extraction of the answer position:

- Collect CoTs from the LRM

- For each CoT, we use a separate LRM to analyze the CoT for the final answer and extract its exact position

- Given the token position index of the answer, we construct the labels: set all positions before the answer to 0 and all positions after to 1

Finally, Terminator is trained with binary cross-entropy loss over each position in the CoT. By using the above training strategy, Terminator learns to exit when the LRM has generated the final answer it will eventually commit to.

Inference Details

At inference time, Terminator makes a prediction for each generated token by the LRM. Terminator forces the end-of-thought token to terminate reasoning early by using the following rules:

- Predict 1 if the predicted confidence is above a fixed threshold (set to 0.7 by default)

- Count the number of predicted 1s in a sliding window of the most recent predictions (the window size is 10 by default)

- If the majority (>5 if the window size is 10) of predictions are 1, then the CoT is terminated

Additional Results

Below are some additional results that we wish to highlight.

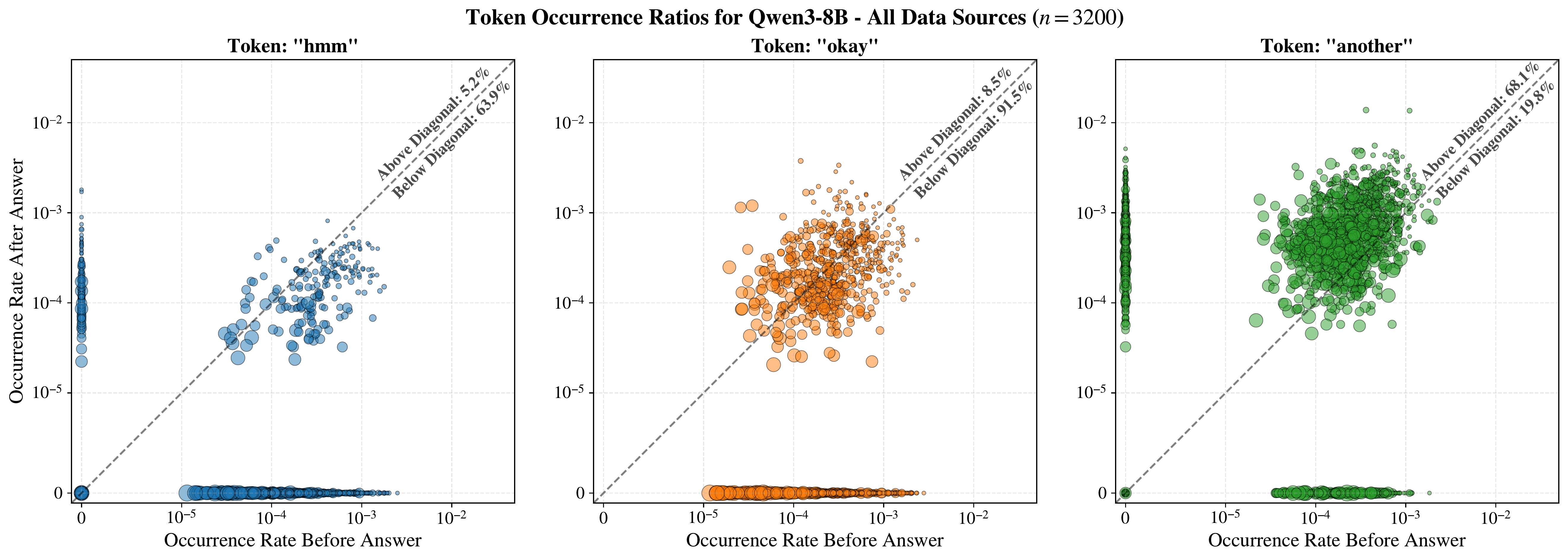

Thinking Token Frequency Shift

"Thinking tokens" such as wait, hmm, okay, and alternatively are associated with ongoing reasoning. We find that they exhibit measurable frequency shifts once the final answer is generated. The plots below show token rates before vs. after the answer for three such tokens. For example, hmm and okay occur more frequently before the answer, while another occurs more frequently after. Dot size reflects relative CoT length. Similar plots with different "thinking tokens" are shown in the appendix of our paper.

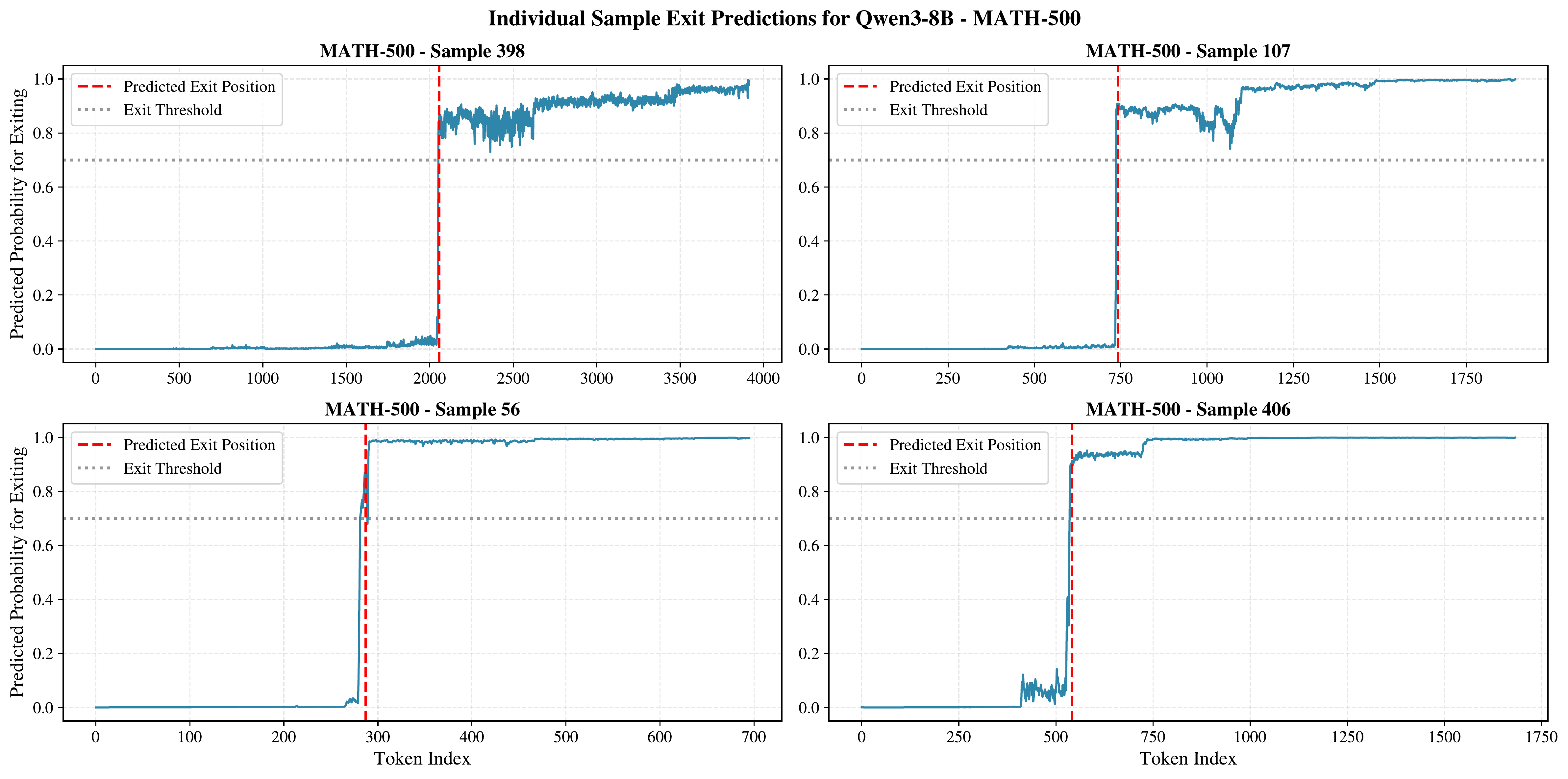

Example 1: MATH-500

For easier problems like those in MATH-500, Terminator shows sharp transitions in predicted confidence at the exiting threshold, with good separation between the "still reasoning" and "answer generated" regimes. By manual inspection, we observe that there is a clearer transition to overthinking on these problems, which Terminator detects well.

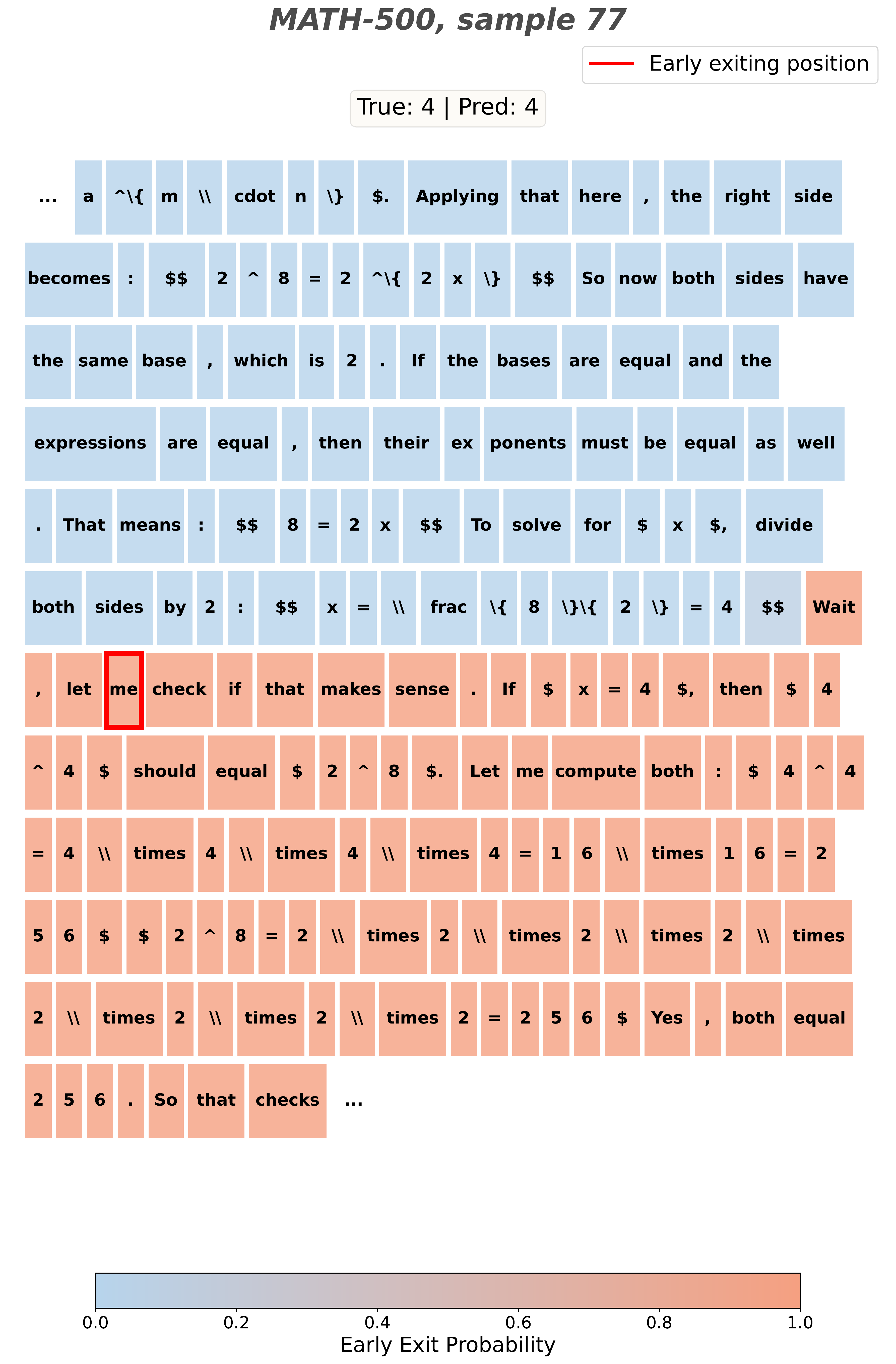

Figure 7 zooms in on a single MATH-500 sample, showing the full CoT with Terminator's predicted probabilities overlaid. The predicted probability remains low throughout the reasoning phase and rises sharply once the final answer has been generated, triggering the early exit.

Example 2: AIME 2025

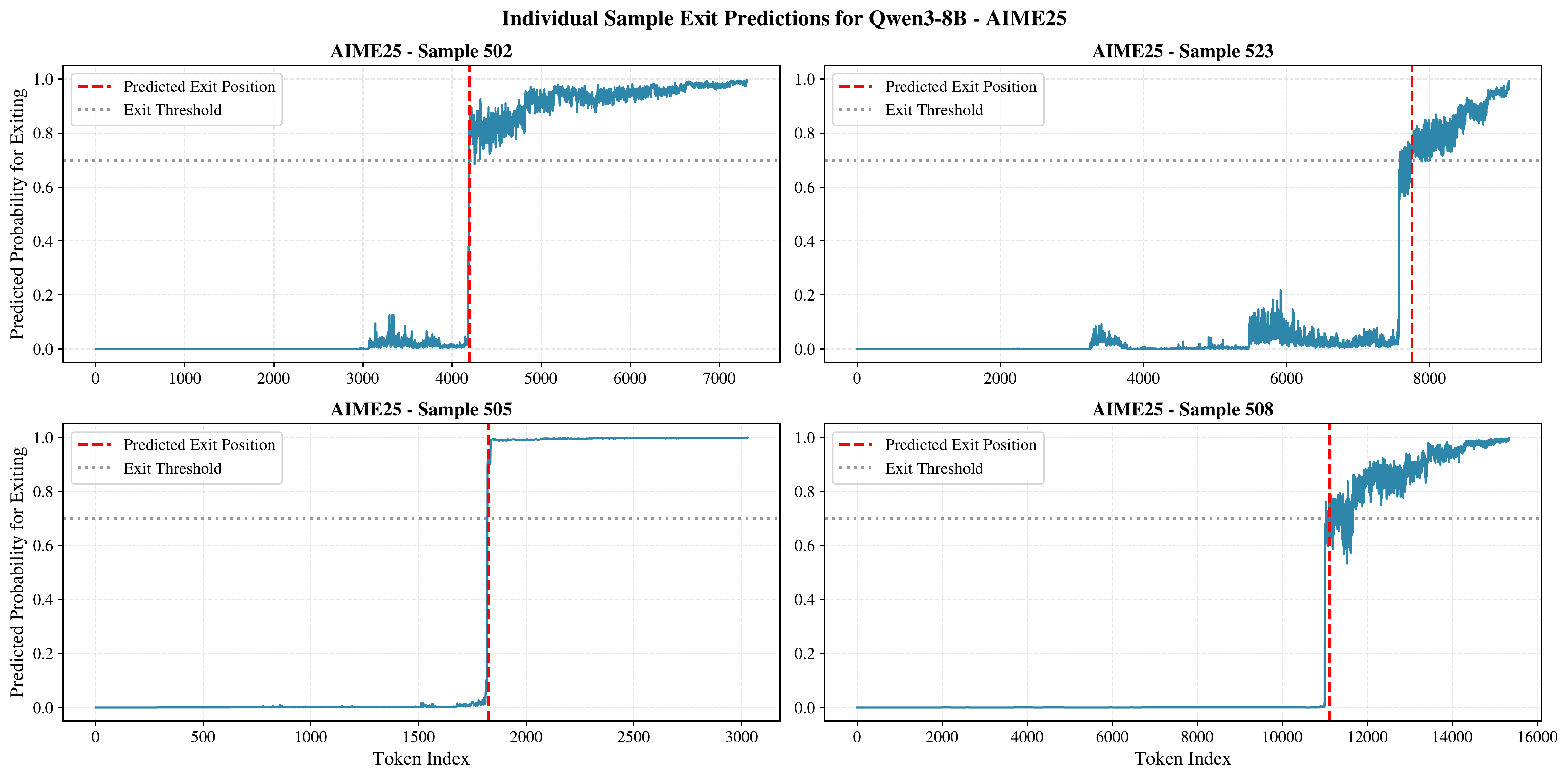

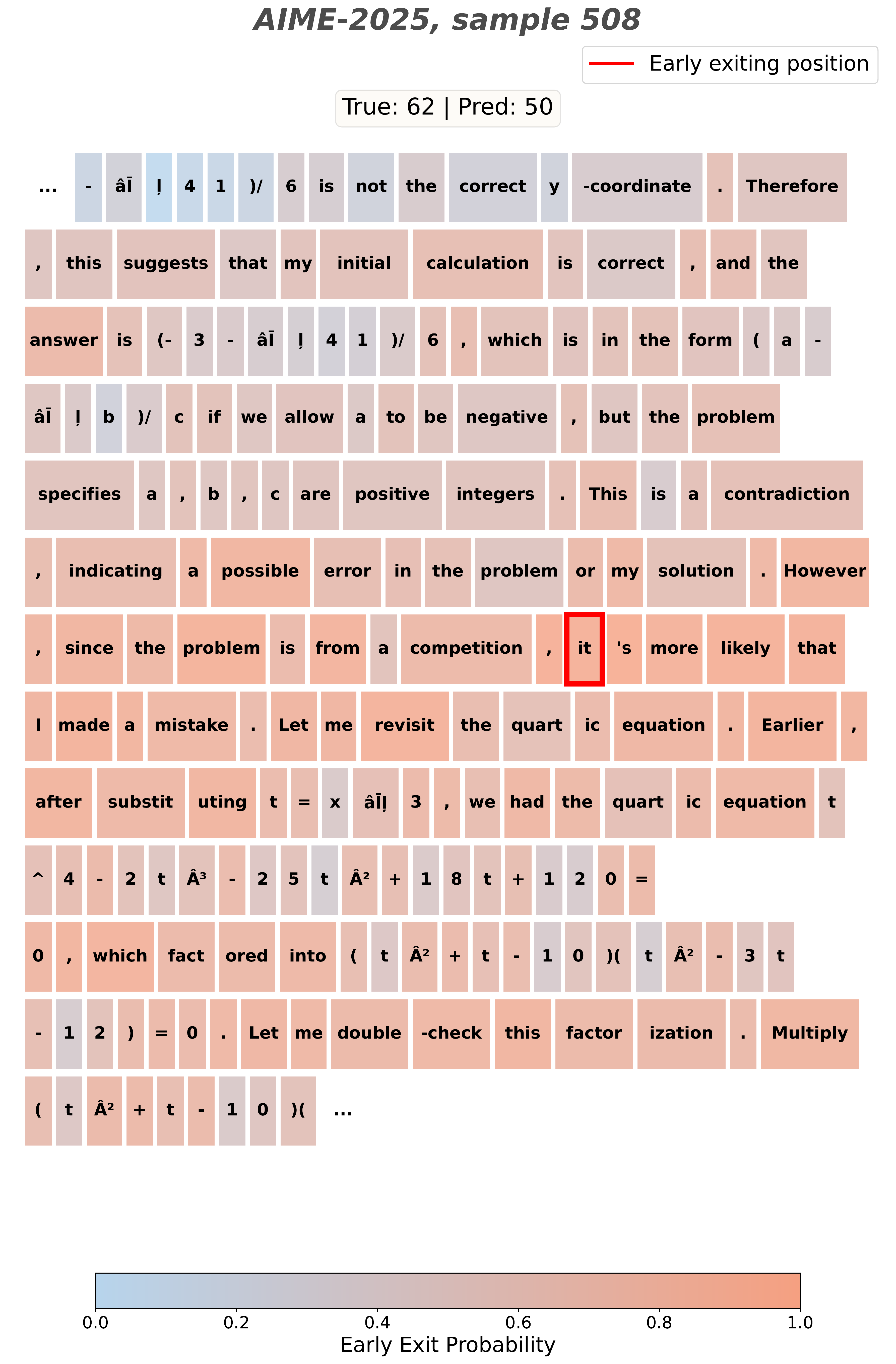

For harder problems like those in AIME 2025, the transition from productive reasoning to overthinking is less obvious, and Terminator's predicted probabilities do not show the same sharp transition seen on MATH-500. This is consistent with the observation that identifying a good exit position is more challenging on very hard tasks.

Figure 9 shows a case where Terminator struggles to find a clean exit position. The predicted probabilities oscillate near the threshold rather than making a decisive jump, reflecting the difficulty of the underlying problem. Despite this, Terminator still achieves strong performance on AIME 2025 overall.

Demo 🚀

Try Terminator yourself! We provide ready-to-run model packages on HuggingFace with a high-performance vLLM server and a standalone inference script.

Available Models

| Model | Base Model | Min. VRAM |

|---|---|---|

| Terminator-Qwen3-8B | Qwen3-8B | ~24 GB |

| Terminator-Qwen3-14B | Qwen3-14B | ~40 GB |

Quick Start

# Clone a model repo (requires Git LFS: https://git-lfs.com)

git lfs install

git clone https://huggingface.co/acnagle/Terminator-Qwen3-8B

cd Terminator-Qwen3-8B

# Automated setup (creates conda env, installs vLLM, downloads base model)

./setup.sh

# Start the vLLM server

./start_server.sh

# In another terminal, chat with the model

python client.py --interactiveStandalone Inference (No Server)

For quick testing without spinning up a vLLM server, use the HuggingFace-native inference script included in each model repo:

python inference_hf.py --prompt "What is the sum of the first 100 natural numbers?"Thinking content is streamed in dimmed text; the final answer is shown in bold. For best performance, the vLLM server is recommended.

Using the API Directly

The vLLM server exposes an OpenAI-compatible API, so you can use any OpenAI client library:

from openai import OpenAI

client = OpenAI(base_url="http://localhost:8000/v1", api_key="EMPTY")

response = client.chat.completions.create(

model="Terminator-Qwen3-8B",

messages=[{"role": "user", "content": "What is 25 * 37?"}],

temperature=0.6,

extra_body={"chat_template_kwargs": {"enable_thinking": True}},

)

# Thinking content (chain-of-thought)

print(response.choices[0].message.reasoning)

# Final answer

print(response.choices[0].message.content)Citation

@misc{nagle2026terminatorlearningoptimalexit,

title = {TERMINATOR: Learning Optimal Exit Points for Early Stopping in Chain-of-Thought Reasoning},

author = {Alliot Nagle and Jakhongir Saydaliev and Dhia Garbaya and Michael Gastpar and Ashok Vardhan Makkuva and Hyeji Kim},

year = {2026},

eprint = {2603.12529},

archivePrefix = {arXiv},

primaryClass = {cs.LG},

url = {https://arxiv.org/abs/2603.12529}

}

↑ Back to sections